# Part 1: Enter your code below to import Pandas according to the

# conventional method. Then load the dataset into a Pandas dataframe.

# Write any code needed to explore the data by seeing what the first few

# rows look like. Then display a technical summary of the data to determine

# the data types of each column, and which columns have missing data.Data Exploration 03

You’re working on an exhibit for a local museum called “The Titanic Disaster”. They’ve asked you to analyze the passenger manifests and see if you can find any interesting information for the exhibit.

The museum curator is particularly interested in why some people might have been more likely to survive than others.

Part 1: Import Pandas and load the data

Remember to import Pandas the conventional way. If you’ve forgotten how, you may want to review Data Exploration 01.

The dataset for this exploration is stored at the following url:

https://raw.githubusercontent.com/byui-cse/cse450-course/master/data/titanic.csv

There are lots of ways to load data into your workspace. The easiest way in this case is to ask Pandas to do it for you.

Initial Data Analysis

Once you’ve loaded the data, it’s a good idea to poke around a little bit to find out what you’re dealing with.

Some questions you might ask include:

- What does the data look like?

- What kind of data is in each column?

- Do any of the columns have missing values?

pip install lets-plotCollecting lets-plot Downloading lets_plot-4.6.2-cp311-cp311-manylinux_2_17_x86_64.manylinux2014_x86_64.whl.metadata (11 kB) Collecting pypng (from lets-plot) Downloading pypng-0.20220715.0-py3-none-any.whl.metadata (13 kB) Collecting palettable (from lets-plot) Downloading palettable-3.3.3-py2.py3-none-any.whl.metadata (3.3 kB) Downloading lets_plot-4.6.2-cp311-cp311-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (3.4 MB) ━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 3.4/3.4 MB 34.1 MB/s eta 0:00:00 Downloading palettable-3.3.3-py2.py3-none-any.whl (332 kB) ━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 332.3/332.3 kB 22.4 MB/s eta 0:00:00 Downloading pypng-0.20220715.0-py3-none-any.whl (58 kB) ━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 58.1/58.1 kB 4.2 MB/s eta 0:00:00 Installing collected packages: pypng, palettable, lets-plot Successfully installed lets-plot-4.6.2 palettable-3.3.3 pypng-0.20220715.0

import pandas as pd

import numpy as np

from pandas.plotting import scatter_matrix

from lets_plot import *

LetsPlot.setup_html()

url = "https://raw.githubusercontent.com/byui-cse/cse450-course/master/data/titanic.csv"

df = pd.read_csv(url)Part 2: Initial Exploration

Using your visualization library of choice, let’s first look at some features in isolation. Generate visualizations showing:

- A comparison of the total number of passengers who survived compared to those that died.

- A comparison of the total number of males compared to females

- A histogram showing the distribution of sibling/spouse counts

- A histogram showing the distribution of parent/child counts

df| PassengerId | Survived | Pclass | Name | Sex | Age | SibSp | Parch | Ticket | Fare | Cabin | Embarked | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 1 | No | 3 | Braund, Mr. Owen Harris | male | 22.0 | 1 | 0 | A/5 21171 | 7.2500 | NaN | S |

| 1 | 2 | Yes | 1 | Cumings, Mrs. John Bradley (Florence Briggs Th... | female | 38.0 | 1 | 0 | PC 17599 | 71.2833 | C85 | C |

| 2 | 3 | Yes | 3 | Heikkinen, Miss. Laina | female | 26.0 | 0 | 0 | STON/O2. 3101282 | 7.9250 | NaN | S |

| 3 | 4 | Yes | 1 | Futrelle, Mrs. Jacques Heath (Lily May Peel) | female | 35.0 | 1 | 0 | 113803 | 53.1000 | C123 | S |

| 4 | 5 | No | 3 | Allen, Mr. William Henry | male | 35.0 | 0 | 0 | 373450 | 8.0500 | NaN | S |

| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |

| 886 | 887 | No | 2 | Montvila, Rev. Juozas | male | 27.0 | 0 | 0 | 211536 | 13.0000 | NaN | S |

| 887 | 888 | Yes | 1 | Graham, Miss. Margaret Edith | female | 19.0 | 0 | 0 | 112053 | 30.0000 | B42 | S |

| 888 | 889 | No | 3 | Johnston, Miss. Catherine Helen "Carrie" | female | NaN | 1 | 2 | W./C. 6607 | 23.4500 | NaN | S |

| 889 | 890 | Yes | 1 | Behr, Mr. Karl Howell | male | 26.0 | 0 | 0 | 111369 | 30.0000 | C148 | C |

| 890 | 891 | No | 3 | Dooley, Mr. Patrick | male | 32.0 | 0 | 0 | 370376 | 7.7500 | NaN | Q |

891 rows × 12 columns

# Part 2: # Write the code needed to generate the visualizations specified.\

survive = ggplot(df, aes(x='Survived')) +\

geom_bar()

survivegender = ggplot(df, aes(x='Sex')) +\

geom_bar()

gendersibspo = ggplot(df, aes(x='SibSp')) +\

geom_histogram()

sibspoparch = ggplot(df, aes(x='Parch')) +\

geom_histogram()

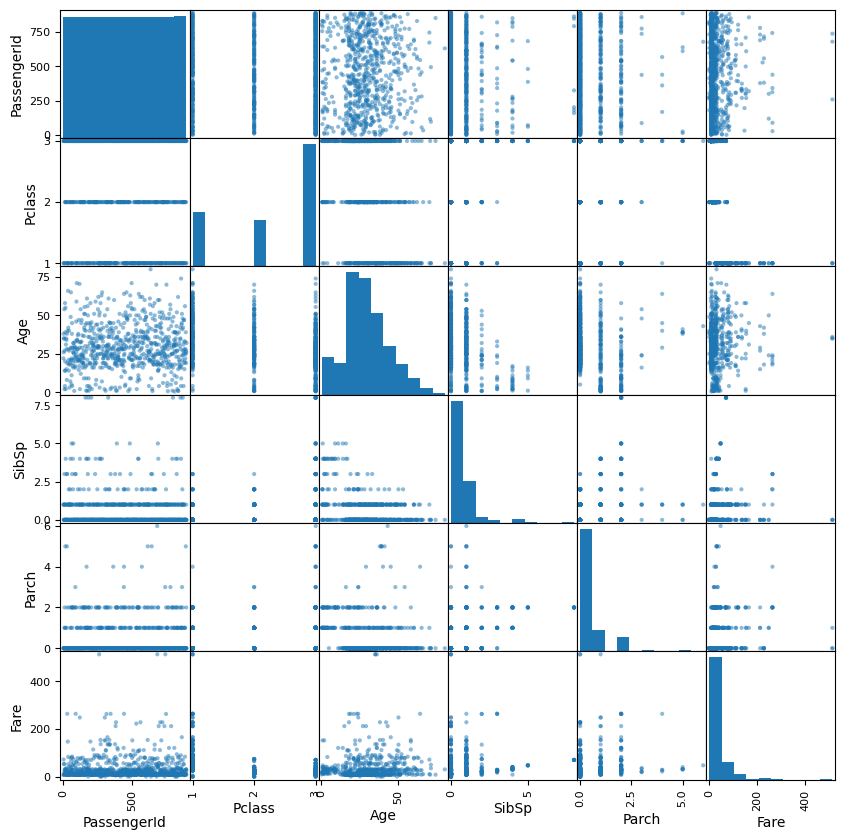

parchPart 3: Pairwise Comparisons

Use your visualization library of choice to look at how the survival distribution varied across different groups.

Choose some features that you think might have had some influence over the likelihood of a titanic passenger surviving.

For each of those features, generate a chart for each feature showing the survival distributions when taking that feature into account

# Write the code to explore how different features affect the survival distribution

scatter_matrix(df, figsize=(10,10))array([[<Axes: xlabel='PassengerId', ylabel='PassengerId'>,

<Axes: xlabel='Pclass', ylabel='PassengerId'>,

<Axes: xlabel='Age', ylabel='PassengerId'>,

<Axes: xlabel='SibSp', ylabel='PassengerId'>,

<Axes: xlabel='Parch', ylabel='PassengerId'>,

<Axes: xlabel='Fare', ylabel='PassengerId'>],

[<Axes: xlabel='PassengerId', ylabel='Pclass'>,

<Axes: xlabel='Pclass', ylabel='Pclass'>,

<Axes: xlabel='Age', ylabel='Pclass'>,

<Axes: xlabel='SibSp', ylabel='Pclass'>,

<Axes: xlabel='Parch', ylabel='Pclass'>,

<Axes: xlabel='Fare', ylabel='Pclass'>],

[<Axes: xlabel='PassengerId', ylabel='Age'>,

<Axes: xlabel='Pclass', ylabel='Age'>,

<Axes: xlabel='Age', ylabel='Age'>,

<Axes: xlabel='SibSp', ylabel='Age'>,

<Axes: xlabel='Parch', ylabel='Age'>,

<Axes: xlabel='Fare', ylabel='Age'>],

[<Axes: xlabel='PassengerId', ylabel='SibSp'>,

<Axes: xlabel='Pclass', ylabel='SibSp'>,

<Axes: xlabel='Age', ylabel='SibSp'>,

<Axes: xlabel='SibSp', ylabel='SibSp'>,

<Axes: xlabel='Parch', ylabel='SibSp'>,

<Axes: xlabel='Fare', ylabel='SibSp'>],

[<Axes: xlabel='PassengerId', ylabel='Parch'>,

<Axes: xlabel='Pclass', ylabel='Parch'>,

<Axes: xlabel='Age', ylabel='Parch'>,

<Axes: xlabel='SibSp', ylabel='Parch'>,

<Axes: xlabel='Parch', ylabel='Parch'>,

<Axes: xlabel='Fare', ylabel='Parch'>],

[<Axes: xlabel='PassengerId', ylabel='Fare'>,

<Axes: xlabel='Pclass', ylabel='Fare'>,

<Axes: xlabel='Age', ylabel='Fare'>,

<Axes: xlabel='SibSp', ylabel='Fare'>,

<Axes: xlabel='Parch', ylabel='Fare'>,

<Axes: xlabel='Fare', ylabel='Fare'>]], dtype=object)

Part 4: Feature Engineering

The museum curator wonders if the passenger’s rank and title might have anything to do with whether or not they survived. Since this information is embedded in their name, we’ll use “feature engineering” to create two new columns:

- Title: The passenger’s title

- Rank: A boolean (true/false) indicating if a passenger was someone of rank.

For the first new column, you’ll need to find a way to extract the title portion of their name. Be sure to clean up any whitespace or extra punctuation.

For the second new column, you’ll need to first look at a summary of your list of titles and decide what exactly constitutes a title of rank. Will you include military and eccelsiastical titles? Once you’ve made your decision, create the second column.

You may want to review prior Data Explorations for tips on creating new columns and checking for lists of values.

# Enter the code needed to create the two new columns

df['Title'] = df['Name'].str.extract(r',\s*([^\.]+)\.')

df

df.value_counts('Title')

def classify(title):

if title == 'Col':

return 'True'

elif title == 'Capt':

return 'True'

elif title == 'Major':

return 'True'

else:

return 'False'

df['Group'] = df['Title'].apply(classify)

df| PassengerId | Survived | Pclass | Name | Sex | Age | SibSp | Parch | Ticket | Fare | Cabin | Embarked | Title | Group | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 1 | No | 3 | Braund, Mr. Owen Harris | male | 22.0 | 1 | 0 | A/5 21171 | 7.2500 | NaN | S | Mr | False |

| 1 | 2 | Yes | 1 | Cumings, Mrs. John Bradley (Florence Briggs Th... | female | 38.0 | 1 | 0 | PC 17599 | 71.2833 | C85 | C | Mrs | False |

| 2 | 3 | Yes | 3 | Heikkinen, Miss. Laina | female | 26.0 | 0 | 0 | STON/O2. 3101282 | 7.9250 | NaN | S | Miss | False |

| 3 | 4 | Yes | 1 | Futrelle, Mrs. Jacques Heath (Lily May Peel) | female | 35.0 | 1 | 0 | 113803 | 53.1000 | C123 | S | Mrs | False |

| 4 | 5 | No | 3 | Allen, Mr. William Henry | male | 35.0 | 0 | 0 | 373450 | 8.0500 | NaN | S | Mr | False |

| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |

| 886 | 887 | No | 2 | Montvila, Rev. Juozas | male | 27.0 | 0 | 0 | 211536 | 13.0000 | NaN | S | Rev | False |

| 887 | 888 | Yes | 1 | Graham, Miss. Margaret Edith | female | 19.0 | 0 | 0 | 112053 | 30.0000 | B42 | S | Miss | False |

| 888 | 889 | No | 3 | Johnston, Miss. Catherine Helen "Carrie" | female | NaN | 1 | 2 | W./C. 6607 | 23.4500 | NaN | S | Miss | False |

| 889 | 890 | Yes | 1 | Behr, Mr. Karl Howell | male | 26.0 | 0 | 0 | 111369 | 30.0000 | C148 | C | Mr | False |

| 890 | 891 | No | 3 | Dooley, Mr. Patrick | male | 32.0 | 0 | 0 | 370376 | 7.7500 | NaN | Q | Mr | False |

891 rows × 14 columns

Revisit Visualizations

Now that you have the new columns in place. Revisit the pairwise comparison plots to see if the new columns reveal any interesting relationships.

# Enter the code needed to recheck the pairwise comparison.Part 5: Encoding

The museum has partnered with a data science group to build some interactive predicitive models using the titanic passenger data.

Many machine learning algorithms require categorical features to be encoded as numbers.

There are two approaches to this, label encoding (sometimes called factorization), and “one-hot” encoding.

Label Encoding

Label encoding creates numeric labels for each categorical value. For example, imagine we have a feature in the data called Pet with these values for the first five rows: ['Dog', 'Cat', 'Dog', 'Dog', 'Bird'].

We could create a new feature called Pet_Encoded where those values are represented as: [0, 1, 0, 0, 2]. Where 0 = Dog, 1 = Cat, and 2 = Bird.

In pandas there are two common ways to label encode a feature:

Method 1: factorize()

First, we could pandas’ factorize() method. It takes the series you want to encode as an argument and returns a list of two items.

The first item is an array of encoded values. The second is the set of original values.

# The factorize() method returns the new values and the originals in a list.

# So the [0] at the end indicates we want only the new values.

myData['Pet_Encoded'] = pd.factorize(myData['Pet'])[0]Method 2: Category Data Type

Every column in a pandas dataframe is a certain datatype. Usually, pandas infers which datatype to use based on the values of the column. However, we can use the astype() method to convert a feature from one type to another.

If we first convert a feature to the category datatype, we can ask pandas to create a new column in the data frame based on the category codes:

# Convert our column to the category type

myData['Pet'] = myData['Pet'].astype('category')

myData['Pet_Encoded'] = myData['Pet'].cat.codesWhichever method we choose, our machine learning algorithm could use the new Pet_Encoded feature in place of the Pet feature.

# Create a new column in the dataset called "Sex_Encoded" containing the

# label encoded values of the "Sex" columnOne-Hot Encoding

One problem with label encoding is that it can make a categorical variable appear as if it contains a quantitative relationship between its values.

In the example above, is Bird twice as important as Cat? Some algorithms might interpret those values that way.

One-Hot encoding avoids this problem by creating a new feature for each category. The value of the new feature is either 0 (is not this value) or 1 (is this value).

In pandas, we can use the get_dummies() method to deal with this problem:

myEncodedData = pd.get_dummies(myData, columns=['Pet'])In the case of our Pet example, the new features created by get_dummies() would be:

| Pet_is_Dog | Pet_is_Cat | Pet_is_Bird |

|---|---|---|

| 1 | 0 | 0 |

| 0 | 1 | 0 |

| 1 | 0 | 0 |

| 1 | 0 | 0 |

| 0 | 0 | 1 |

Notice that for our data, if Pet_is_Bird = 0 and Pet_is_Cat = 0, we know that the pet has to be a dog. So the Pet_is_Dog column contains redundant information. When this happens, we say that our data contains a multicollinearity problem.

To avoid this, we can tell get_dummies() that we want to get rid of one of the columns using the drop_first parameter:

myEncodedData = pd.get_dummies(myData, columns=['Pet'], drop_first=True)The main disadvantage to One-Hot encoding is that if the feature you’re encoding has a lot of different values, it can result in a lot of extra features. This can sometimes lead to poor performance with some types of algorithms.

# Use the pandas get_dummies() method to one-hot encode the Embarked column.Part 6: Conclusions

Based on your analysis, what interesting relationships did you find? Write three interesting facts the museum can use in their exhibit.

🌟 Above and Beyond 🌟

There appears to be a lot of different variations of similar titles. (such as abbreviations for Miss and Mademoiselle).

Scan through the different titles to see which titles can be consolidated, then use what you know about data manipulation to simplify the distribution.

Once you’ve finished, check the visualizations again to see if that made any difference.

The museum curator has room for a couple of nice visualizations for the exhibit. Create additional visualizations that are suitable for public display.